Everything posted by Pocster

-


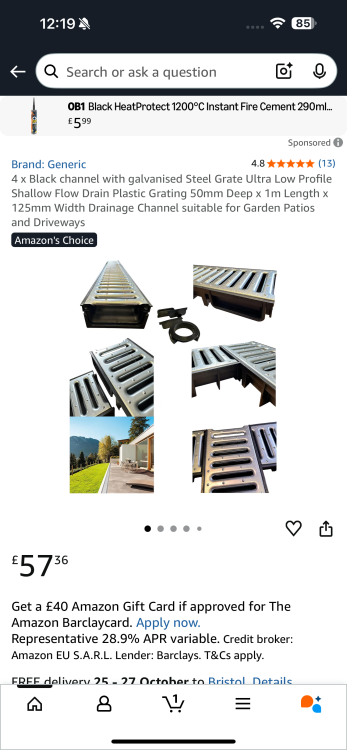

Lots of rain over the last few weeks. Most at night so I don’t “count it”. Wee’d down today. Went out to look at my low profile drain. Certainly doing its job! Small river coming out the other end, draining away from the property. Still can ‘feel’ some moisture inside (I think!) but zero water drops. Paranoia causes me to check the room with the leak a few times every day… 🤪

-

We’ve all been there also 👍

-

Saniflo alarm not adequate

Pocster replied to Pocster's topic in Networks, AV, Security & Automation

I’ve got another project using waterproof ultrasonic transducers linked to an ESP32 - works really well. The device you link to might be worth a punt. Be keen to know how you get on.

I know what you mean, the “Swindon office” look. It doesn’t have to look like that. It is subjective of course.

-

All done. Decking board is just to protect the cement from rain. If nothing else it will direct water away from the property.

-

A "Man O Meter" ......

-

Where’s the friggin cinema room ??

-

We’ve all been there …

-

Bro - don’t worry. Take your time, buy lots of tools, enjoy the journey.

-

Not me mate. Even my SWMBO (as you can imagine) was sceptical before. Not only does it allow easy alterations etc. at a later date - it actually looks really good. The problem is, as I implied, most of these ceilings look like shite - because the edging is shite, they've had leaks/cracks/stains and no one fixes them. They stick in a big full-tile (expletive deleted)ing horrible LED square for a light. I'm actually surprised how 'soft' and unobtrusive it is. Let's be honest though; when you live with something it does 'fade' into the background a bit. I think if you saw my ceiling first hand you would not be overwhelmed with "Swindon office" style. I *deliberately* have had people in who would freely give their opinion - and I know they would say exactly what they think regardless of me - yet instead it's always "where is the TV?" or "I don't like those tiles" - clearly they are looking elsewhere. If you choose the correct ceiling tiles and install with care - so NOT the ones in the gym or Jobcentre ceiling - then you can get away from that polystyrene 'cheap' look. Come and take a look!

-

For that authentic dungeon look! In your basement it's probably the BEST solution for your ceiling. It allows lighting/speakers to be moved/rewired at will. Makes any cabling and/or pipework from the floor above easily accessible. It's lightweight and easy to install. Cheaper than stud/board/skim. BETTER! Not because I did it, but because the pros outweigh the negatives. Ultimately no one looks at a ceiling - if you don't copy the "Swindon" look, i.e. stained tiles, tile-size 'office' lights etc. - it's just a white surface over your head.

-

Just do metal frame for stud and ceiling - like me 😊. Easy as pee

-

Yeah - I’ll try that. Too slow you lot! 😊

-


Hmmm. I can’t take enough blocks out to get the water to fall away. What about an ACO-type drain here? The 90 at the end is important, as I can get water to run away with the fall there rather than settling at the corner. Good plan / bad plan?

-

Took out those blocks so the main water escape route is forwards, away from the build. Any idea what I could put here? A Clark’s drain type cover but with a grill?

-

So to put it in context! If you zoom in you can see the green is shiny, i.e. damp. Yeah I know it’s minimal - but it’s still there. I’ll try my plan out the front for a few months. I know some of you will say “(expletive deleted) that, just seal the concrete in the upstand” - what concerns me is where the water goes if it can’t get through there…

-

So I’m going to try a plan I had before: remove the blocks on this drawn section so water can’t pool and run along the wall, giving it a way to run off away from the wall. Remember, with those 4 courses not in I had zero leaks! So is the issue water running along the wall base and maybe down the lane…?

-

Where the cone is now, nice tosspot basically put some of my pavers. Can’t possibly have sand on the lane! Tosser!

-

Noticed damp in the recess. Went outside to look. Only light rain today. A neighbor (aka wanker) had placed some of the cobble stones back at the end, presumably to stop the sand washing out. No idea when they did this, but it will clearly cause a dam. So clearly still an issue (can’t say if their blocks caused it, but it doesn’t help me). Removed the blocks and put a cone out now ffs. So still an issue - what a bast!

-

Paint what? The underside of a glazing frame sat on an upstand?

-

I can’t get to the outside of the foam

-

I did. I posted on here

-

Don’t doubt you. But the spec should say something. I did use a Soudal one (so not cheap brand shite). I did just 1 glazed unit - I’ll see how it goes (as the foam is in). If I determine the leak is still over the upstands, i.e. the foam’s doing jack, I’ll remove the glass next summer, scrape out the foam and redo with your suggested weapon of choice.